Customized Downloads with Rivet and Metakit

David N. Welton

davidw@dedasys.com

2005-08-30

Recently, I was faced with an interesting challenge in the course of creating a system for a client.

The system is large and complex, so I won't go into details on its overall use and functionality. The portion I was working on works like so: the users of the system, who have low to moderate technical skills, must download a client that runs on their own machines and communicates with the central server, which we manage. Periodically, the server sends the clients instructions that they must carry out. In reality, in order to make the system as robust as possible, and to utilize existing protocols, we deploy the clients as CGIs that the users install on their web servers. It is also important that the communication protocol between client and server is, if not secure, at least robust. Requiring an SSL connection would lower the number of people who could deploy our system, so it was decided to use a more direct method. It is not all that important to encrypt the data in transit, as its value is limited, but what we needed to avoid were spoofing attacks, where someone copies the protocol and uses it to send data to other users. We need to make sure that our central server is the only one sending out commands. Given that this software might run without any updates for months, or maybe even years, depending on a single IP or hostname for authentication was also not acceptable, and would run the risk of being spoofed in any case.

The whole system also has to be as easy as possible to download and install. Instructions like "now untar it and edit the config file with vi" are not going to fly with the system's end users! Very simple and spoof-proof were the major requirements.

Being a Tcl hacker, of course the first thing that came to mind when I understood the requirement for simplicity was Jean-Claude Wippler's "Tclkit" system. Using Tcl, the Metakit database system, some compression libraries, a dash of black magic and a lot of hackery, Tclkit lets you wrap up an entire application written in Tcl, spread over multiple scripts and even shared libraries, and distribute it as a single file, or if you want a bit more flexibility at the expense of simplicity, two files - one as a Tcl and Tk runtime, and the other as the application itself and any associated support packages. In this case, two files doubles the chances of having an inexperienced user make some sort of mistake, so I went with a single file executable "Starpack", as it's known. It just doesn't get any simpler than that - all they would have to do is take the file and put it in the right place and it does the rest.

But the second problem, defending against random people sending data to our client software, became all the more thorny at this point. The method we selected, using a one way hash algorithm to authenticate data (along with a few other tricks), requires a key to be placed in the executable, so that it can confirm the data sent to it is good. And naturally, each of our software's users, or even every computer they download it to, should have its own key, so that one user can't control another's client. And thus, our problem - how to distribute a separate key to each user's client software in as simple a fashion as possible?
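
To make the idea of authenticating data with a one way hash and a shared key concrete, here is a minimal sketch. It assumes HMAC-SHA1 from tcllib's sha1 package, which is only one possible choice; the article does not say which algorithm or which "other tricks" the real system used. The proc names, the key, and the message are hypothetical.

```tcl
package require sha1 ;# tcllib's sha1 package (assumed to be installed)

# Compute a keyed digest over a command payload.
# HMAC-SHA1 is an assumption here, not necessarily what the real system used.
proc sign_command {key payload} {
    return [sha1::hmac -hex -key $key $payload]
}

# Accept a command only if its signature matches the one computed locally.
proc verify_command {key payload signature} {
    return [string equal [sign_command $key $payload] $signature]
}

set key "per-client-secret" ;# hypothetical key embedded in one client
set msg "run-task 42"       ;# hypothetical command from the server
set sig [sign_command $key $msg]

puts [verify_command $key $msg $sig]        ;# prints 1
puts [verify_command "wrong-key" $msg $sig] ;# prints 0
```

A signature like this only shows that the sender knows the key. It does nothing to stop an old message from being replayed, which is presumably the kind of gap the additional tricks would have to cover.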

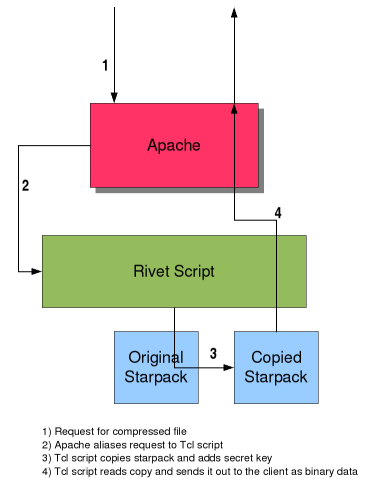

The answer turns out to be a clever use of Apache, Rivet, and Metakit. Apache's role in the solution is the Alias directive, which allowed us to invisibly redirect requests for http://example.com/software.cgi.gz to /web/server/path/makecgi.tcl on the local filesystem. That got us to a point where we could script a response to the request with Rivet (server side Tcl for Apache), which was pretty simple actually. To just send out data, all we had to do was open the real software.cgi file, and hook that file descriptor up to Apache's out file descriptor with the fcopy command, and watch the data flow. Of course, for our purposes, another step is required in the middle in order to do the crucial job of adding a key to the client software being sent out.
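
The plain "send the file out" step can be sketched roughly as follows. The proc name stream_file is hypothetical, and the Rivet-specific lines are left as comments so that the proc itself runs in any Tcl interpreter. The content type and the location of software.cgi are assumptions, not taken from the original setup.

```tcl
# Copy a file, byte for byte, to an already open output channel.
# Returns the number of bytes copied.
proc stream_file {path out} {
    set in [open $path r]
    # Binary mode on both ends, so no newline or encoding translation occurs.
    fconfigure $in  -translation binary
    fconfigure $out -translation binary
    set copied [fcopy $in $out]
    close $in
    return $copied
}

# Inside the Rivet script (makecgi.tcl), where stdout is connected to the
# HTTP response, the call might look like this (path and type are assumed):
#
#   headers type application/octet-stream
#   stream_file /web/server/path/software.cgi stdout
```

Keeping the copy in its own proc means the key-writing step can later be slotted in before it without touching the code that talks to Apache.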

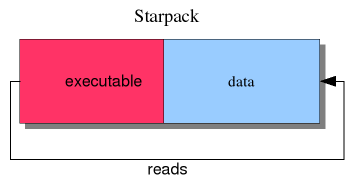

To understand exactly what is involved, we need to back up and explain just how starpacks work. Tcl is normally spread all over the filesystem, between the tclsh executable, the shared library, and various support scripts loaded at 'boot' time. That's fine, usually. Using a package manager like Debian's apt, or rpms makes it easy to keep track of things, install and uninstall them. We don't always have the luxury of nice tools, though. Starpacks take a much more compact approach - they statically compile Tcl and some other code into an executable that starts reading from the end of itself when it is run. To make this work, a data file is tacked on to the end of the executable at build time. This data file contains information on its structure and how large it is, so that the initial read operation doesn't read past the end of the data tacked onto the executable and start reading the binary file itself.

The data file is a Metakit database with compressed data. Metakit is a unique database system that lies somewhere between full on relational databases that utilize SQL, and very simple systems like Berkeley DB. Starpacks, and their runtime cousins, Starkits, contain Tcl code that lets us view a Metakit database as a "Virtual File System" - we can mount it, cd to it, open it up and write files.

This is the trick that we also use to write the unique key to each file as it goes out. Before pushing the data out to the user's browser, we make a copy of an original starpack, open it up, write a new key inside it, compress it, then send the finished product out. The user notices none of this, as it happens very quickly and transparently, and the file arrives as if it were simply a file downloaded from the web server.
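
The copy, open, and write steps might look something like the sketch below. It assumes the tclvfs package vfs::mk4 is available. The proc name make_keyed_copy and the file name key.txt inside the starpack are hypothetical. Generating the key, compressing the result, and sending it are left out.

```tcl
package require vfs::mk4 ;# Metakit VFS driver from tclvfs (assumed installed)

# Copy a pristine starpack and write a per-download key into the copy.
# "original" is the untouched starpack, "copy" is the file to be sent out.
proc make_keyed_copy {original copy key} {
    file copy -force $original $copy

    # Mount the Metakit data at the end of the copy, using the file's own
    # path as the mount point, so that it can be treated as a directory.
    vfs::mk4::Mount $copy $copy

    # key.txt is a hypothetical name; the client would read it at startup.
    set f [open [file join $copy key.txt] w]
    puts -nonewline $f $key
    close $f

    # Unmounting closes the database and commits the change to the file.
    vfs::unmount $copy
    return $copy
}
```

A call such as `make_keyed_copy software.cgi /tmp/software-1234.cgi $key` (hypothetical paths) would leave the original untouched, so each request starts again from the same clean base.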

Of course, you could use this trick with regular old executables too, but it would be much more difficult, because you have to know the exact location in the file to write to, and you must be very careful not to write too much. Furthermore, you have to recalculate everything each time your executable changes, and for each and every platform you ship for. With the Metakit approach, we can script the whole thing in a few lines of Tcl, and it doesn't matter whether we're sending out a Linux or a Windows version - the only thing that changes is the base that it's built on.

The end result is therefore just as simple to use as ever, and resistant to trivial attacks. There certainly exist security protocols that would be more bullet-proof than the one we used, but we feel this is a good compromise between creating something that is quick and easy to use, and something that has no security at all.